Business Use Case Testing (BUCT)

The problem with traditional testing

Traditional testing answers: "Did the API return 200?"

BUCT answers: "Did the business outcome actually happen?"

In distributed systems, individual components can all pass their tests while the business workflow silently fails. A payment can be authorised without an invoice being generated. An order can be created without inventory being decremented. These are logical regressions — invisible to unit and integration tests, fatal to the business.

The high-speed risk gap

AI coding assistants generate code faster than manually written tests can keep up with, so traditional testing becomes a bottleneck. BUCT acts as a control layer that scales alongside AI-driven development.

BUCT vs traditional testing

| Dimension | Traditional testing | BUCT |
|---|---|---|
| Primary focus | Technical integrity: units, APIs, schemas | Business outcomes: workflows, rules, revenue paths |
| Core question | Is the code right? | Is the business right? |
| Failure mode caught | Crashes, schema errors, status codes | Logical regressions, silent business failures |
| Cross-service coverage | Limited | Full orchestration awareness |
| Scales with AI codegen | No; becomes a bottleneck | Yes; acts as a control layer |
| Risk level | Low-level technical risk | High-level business and brand risk |

Implementing BUCT

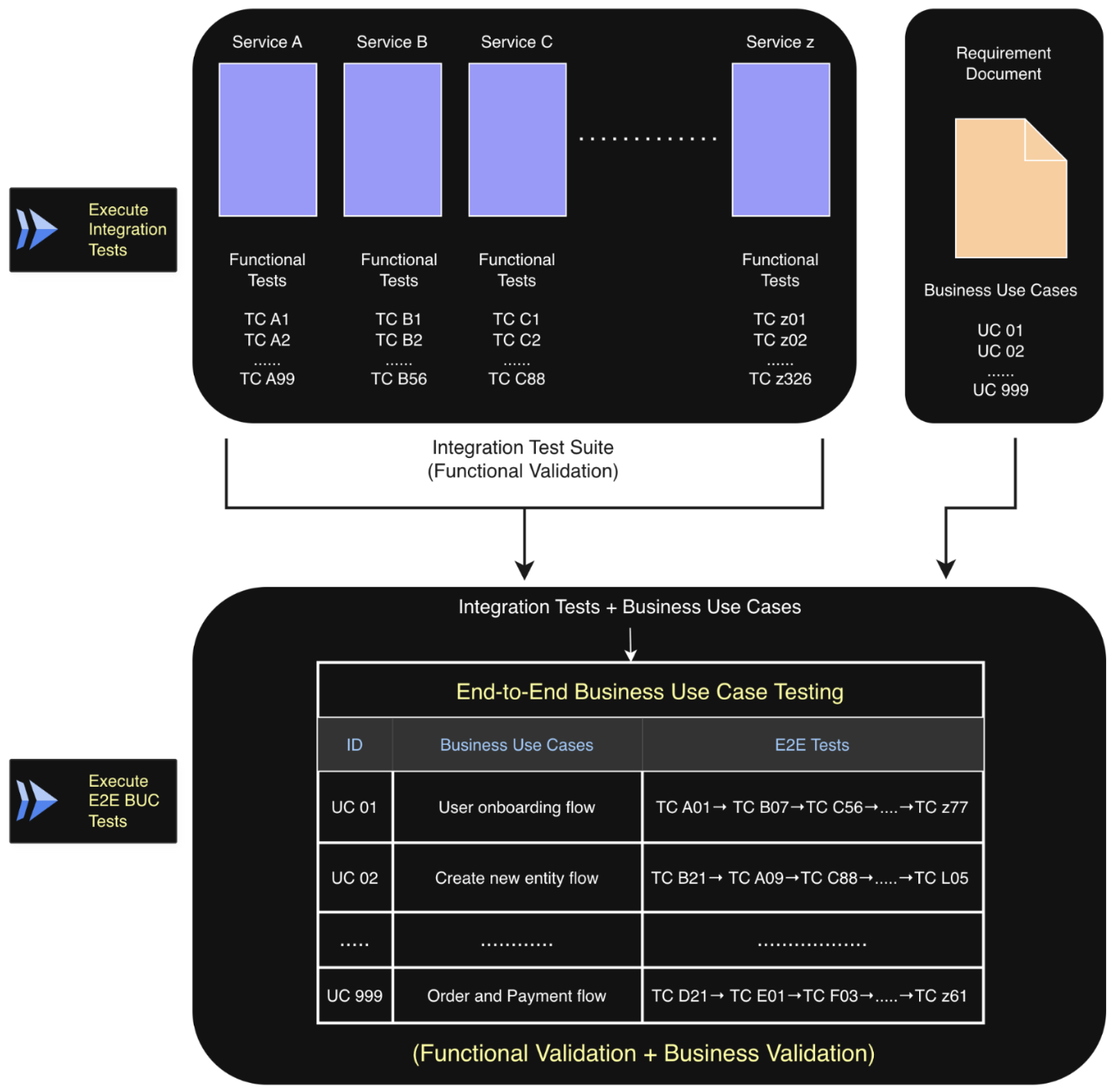

In the Quick Start section, we learnt how to get started with integration testing of your application and discussed how BaseRock tests your application on two levels (Integration and Business Use Case), as shown in the diagram above.

Now we will focus on level 2, i.e., Business Use Case Testing, with both functional and business validations.

Below is the user flow to utilise BUCT in test automation.

    flowchart TD
        classDef phase1 fill:#eceff1,stroke:#90a4ae,color:#263238
        classDef phase2 fill:#e8eaf6,stroke:#5c6bc0,color:#1a237e
        classDef phase3 fill:#e8f5e9,stroke:#43a047,color:#1b5e20

        A[Login]:::phase1
        A --> B[Create a business flow]:::phase2
        B --> C[Upload BRD or PRD]:::phase3
        C --> D[Extract use cases]:::phase3
        C --> E[Add an existing service]:::phase3
        D --> F[Map use case to test case]:::phase3
        E --> F
        F --> G[Generate playbook]:::phase2
        G --> H[Execute]:::phase2

1. BRD ingestion

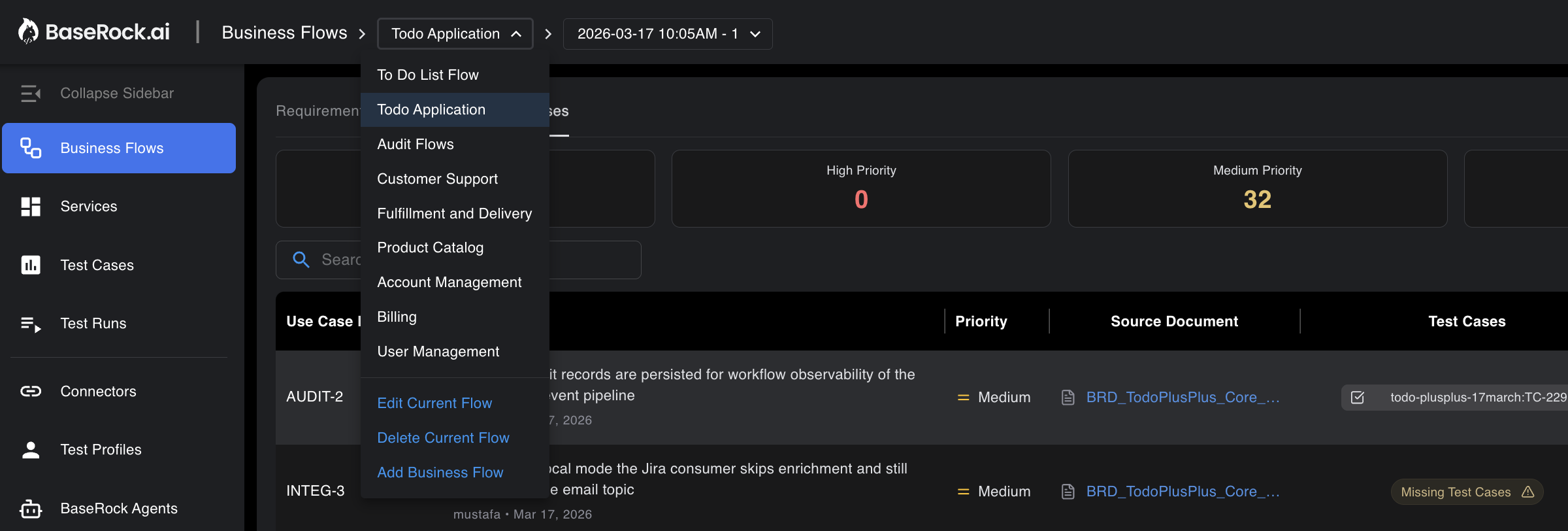

In the left panel, go to the Business Flows section and create a flow, as shown in the screenshot.

Upload your Business Requirement Documents (.txt or .pdf) in the Requirements tab.

2. AI-driven use case generation

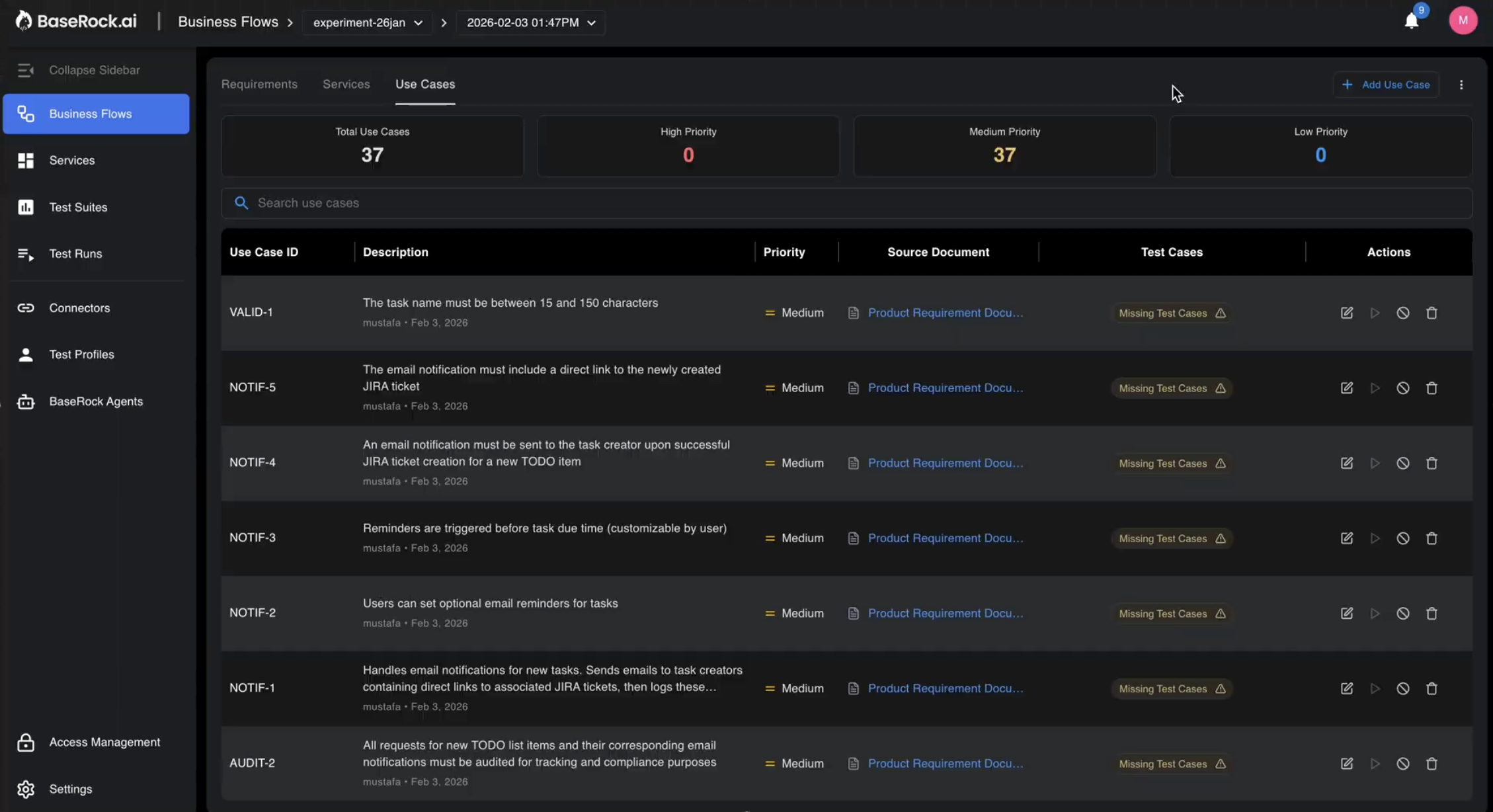

Navigate to the Use Cases tab and click Generate Use Cases. The platform parses the document, and the AI converts abstract business statements into structured, executable test scenarios as shown below.

Example

BRD input: "When a customer places an order, it should be fulfilled and invoiced."

BUCT output: A multi-step flow validating payment authorisation, inventory decrement, invoice generation, email dispatch, shipment trigger, and audit logging.

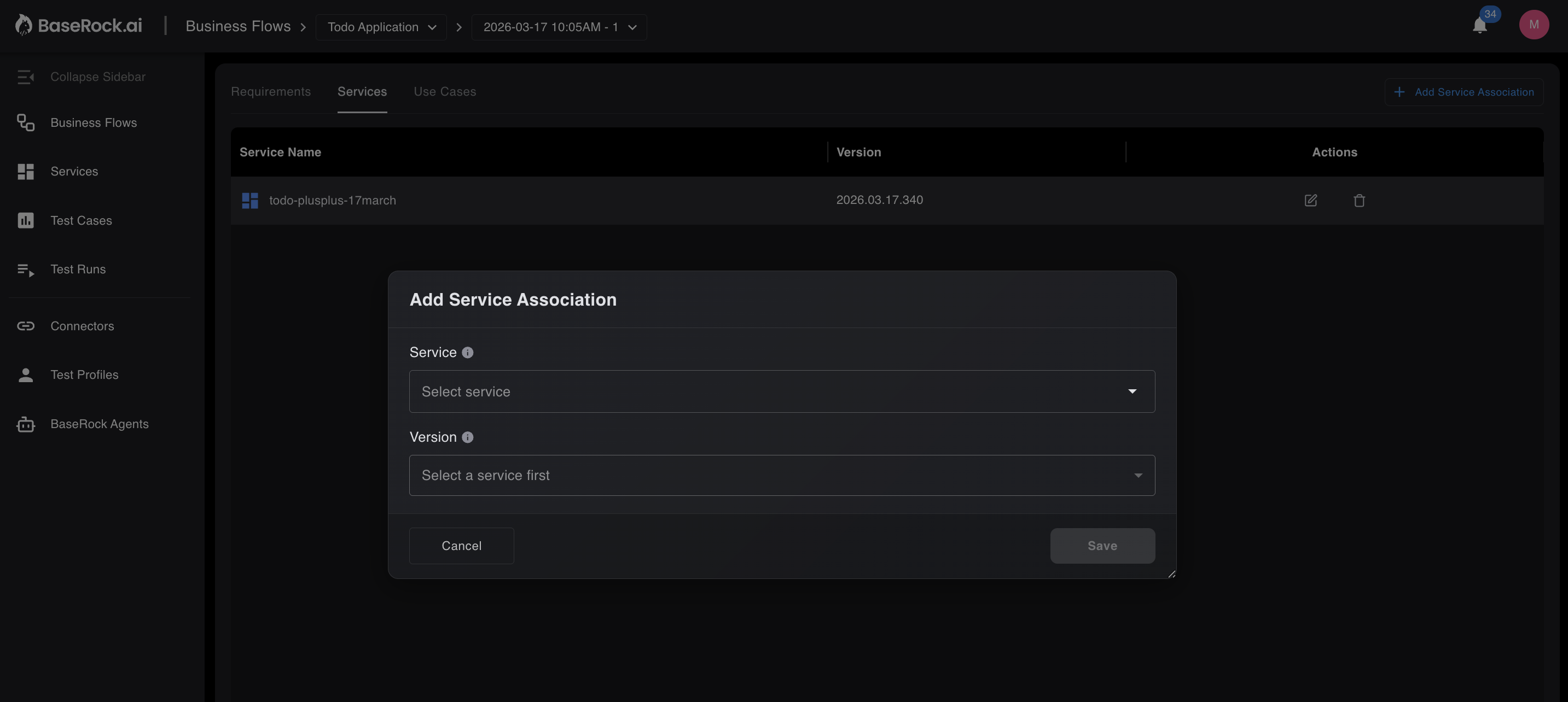
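To make "structured, executable test scenario" more concrete, here is a minimal Python sketch of how the single BRD sentence above might be represented as data. The `UseCase` and `Step` classes and all field names are hypothetical illustrations, not BaseRock's actual output format.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One validation step in a business flow (hypothetical shape)."""
    name: str
    expectation: str


@dataclass
class UseCase:
    """A business use case derived from one requirement sentence."""
    requirement: str
    steps: list[Step] = field(default_factory=list)


# The six validations named in the BUCT output above, in order.
order_flow = UseCase(
    requirement="When a customer places an order, it should be fulfilled and invoiced.",
    steps=[
        Step("payment", "payment is authorised"),
        Step("inventory", "stock is decremented"),
        Step("invoice", "invoice is generated"),
        Step("email", "confirmation email is dispatched"),
        Step("shipment", "shipment is triggered"),
        Step("audit", "audit log entry is recorded"),
    ],
)

step_names = [step.name for step in order_flow.steps]
print(step_names)
```

The point of the sketch is only that one abstract sentence expands into several explicit, checkable expectations.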

3. Add existing service

For BUCT to know which endpoints it can act on, add a service that already exists on BaseRock under the Services tab within the Business Flow.

4. Requirement-to-test mapping

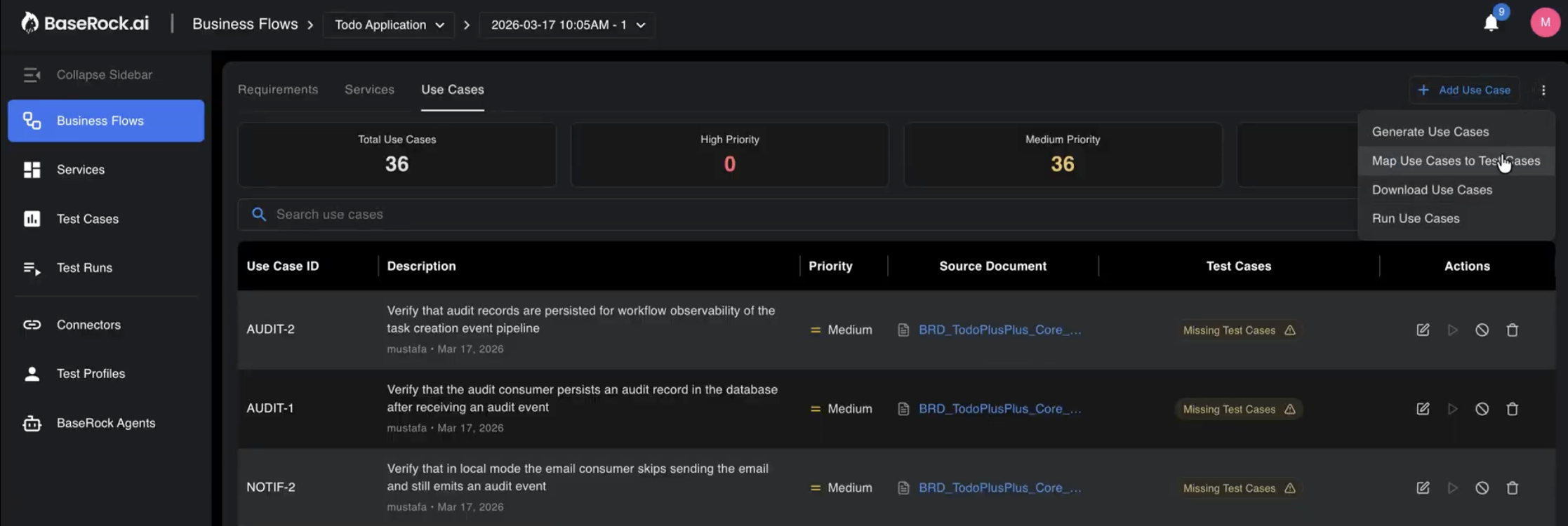

Click the three dots in the top-right corner and select Map Use Cases to Test Cases. This generates test cases for the respective use cases. Use cases for which no test can be produced are marked as missing test cases, which shows the coverage gap between the requirement and the service under test.

If test cases were already generated inside the service, the extracted business use cases are mapped to the relevant existing test cases, ensuring cross-service coverage, not just endpoint-level coverage.
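The idea of a coverage gap can be sketched in a few lines of Python. This is an illustration only: matching by a shared tag is an assumption made for the example, and the names `map_use_cases`, `use_cases`, and `test_cases` are hypothetical. The platform's own mapping logic is not described here.

```python
def map_use_cases(use_cases, test_cases):
    """Map each use case to the tests sharing its tag; collect unmapped ones.

    use_cases:  dict of use-case name -> tag (hypothetical matching key)
    test_cases: dict of test name -> tag
    """
    mapping = {}
    missing = []
    for use_case, tag in use_cases.items():
        matches = sorted(name for name, t in test_cases.items() if t == tag)
        if matches:
            mapping[use_case] = matches
        else:
            # No test covers this requirement: this is the coverage gap.
            missing.append(use_case)
    return mapping, missing


use_cases = {
    "Authorise payment": "payment",
    "Decrement inventory": "inventory",
    "Generate invoice": "invoice",
}
test_cases = {
    "test_payment_authorised": "payment",
    "test_no_duplicate_charge": "payment",
    "test_stock_reduced": "inventory",
}

mapping, missing = map_use_cases(use_cases, test_cases)
coverage = len(mapping) / len(use_cases)
print(mapping)
print(missing)
```

Here "Generate invoice" ends up in `missing` because no test carries the `invoice` tag, which is the kind of gap the missing-test-case marker surfaces.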

5. Playbook generation

Create configurations and playbooks for the services under test in the same way as described in the Quick Start section.

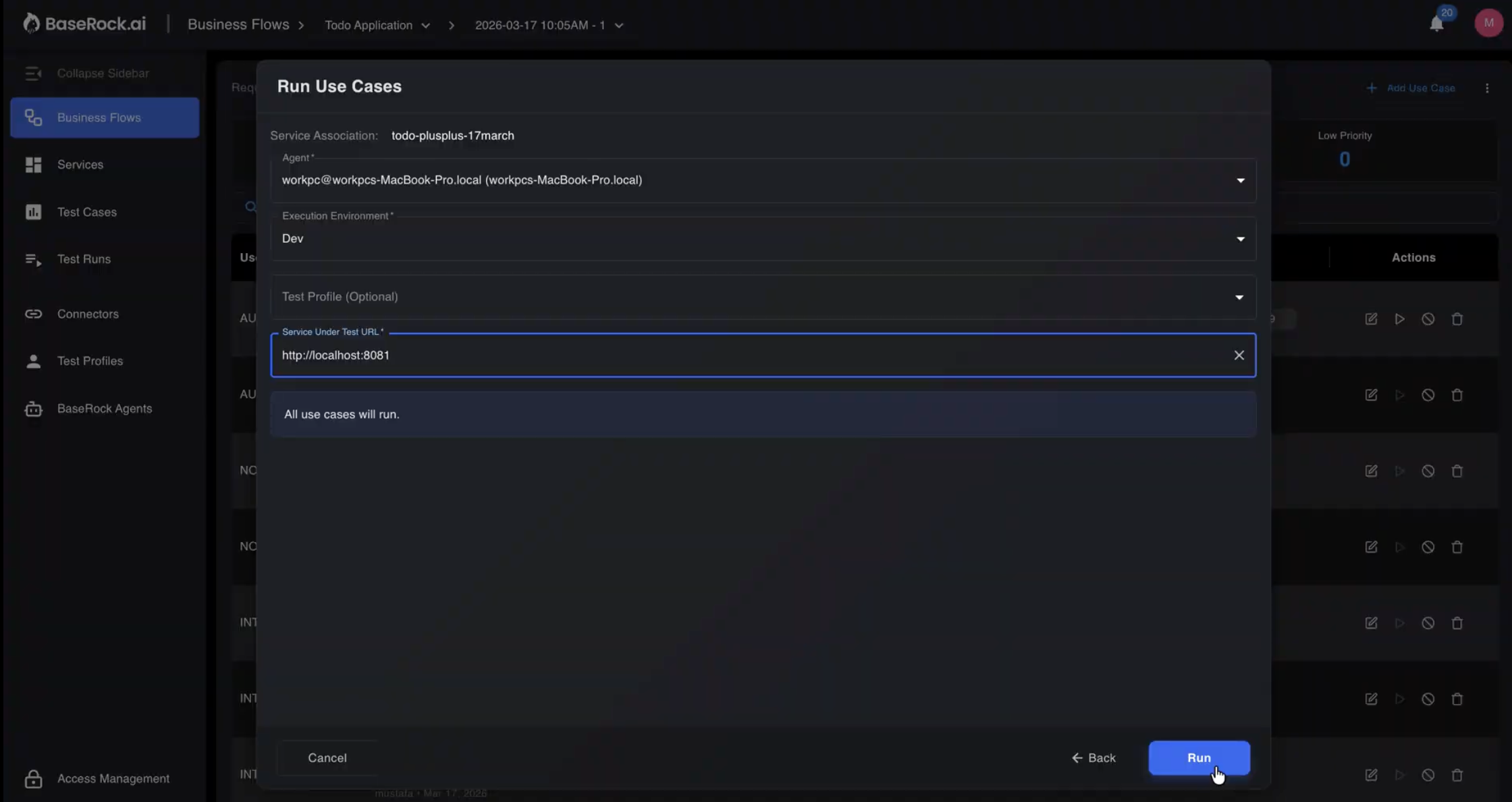

6. Agent-based execution

Once mapping is complete and the playbooks and configurations are set up, you can start testing with the BaseRock Agent.

The BaseRock Agent executes deterministic end-to-end flows inside your CI/CD pipeline. It works across microservice and monolithic architectures.

To learn more about the BaseRock Agent, refer to the BaseRock Agent Usage section.

BUCT example: eCommerce order placement

PRD statement: "When a customer places an order, it should be fulfilled and invoiced."

A traditional test validates that:

- `POST /orders` returns HTTP 200

- Response schema matches spec

- Required fields are present

It does not validate whether the business outcome actually happened. BUCT validates that:

- Payment gateway authorisation succeeded

- Inventory decremented by correct quantity

- Invoice was generated

- Confirmation email was dispatched

- Shipment workflow was triggered

- Audit logs recorded with correct timestamps and user attribution
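The difference between the two lists above can be shown with a small, self-contained Python sketch. `FakeShop` is a hypothetical in-memory stand-in for an order service with a deliberate bug; it is not BaseRock code and does not call any real API.

```python
class FakeShop:
    """In-memory stand-in for an order service with a deliberate bug."""

    def __init__(self, stock):
        self.stock = stock
        self.invoices = []

    def place_order(self, order_id, quantity):
        # Bug on purpose: the invoice is created, but stock is never reduced.
        self.invoices.append(order_id)
        return {"status": 200, "order_id": order_id}


def traditional_check(response):
    """Endpoint-level check: status code and required field only."""
    return response["status"] == 200 and "order_id" in response


def business_check(shop, order_id, stock_before, quantity):
    """Outcome-level check: did the expected side effects happen?"""
    failures = []
    if order_id not in shop.invoices:
        failures.append("invoice not generated")
    if shop.stock != stock_before - quantity:
        failures.append("inventory not decremented")
    return failures


shop = FakeShop(stock=10)
response = shop.place_order("ORD-1", quantity=2)

endpoint_ok = traditional_check(response)
outcome_failures = business_check(shop, "ORD-1", stock_before=10, quantity=2)
print(endpoint_ok)
print(outcome_failures)
```

In this run the endpoint-level check passes while the outcome-level check reports that inventory was not decremented, which is the silent failure the section describes.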

Step 1 — Order creation

- Confirm order ID is generated

- Validate status is initialised correctly

Step 2 — Payment

- Confirm payment authorisation

- Ensure `transaction_status = SUCCESS`

- Assert no duplicate charges

Step 3 — Inventory

- Verify stock reduced by purchased quantity

- Assert no negative inventory state

Step 4 — Notifications

- Confirm confirmation email trigger

- Validate email contents — Order ID, pricing, delivery address

Step 5 — Shipping

- Confirm shipment record creation

- Validate correct warehouse assignment

Step 6 — Audit & compliance

- Confirm transaction log entry

- Validate timestamp and user attribution
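The six steps above can be sketched as an ordered list of checks run against a snapshot of system state. Everything in this example is assumed for illustration: the `state` dictionary, its keys, values such as `CREATED` and `WH-EAST`, and the helper names. A real flow would gather this state from the services under test.

```python
# Hypothetical snapshot of system state gathered after one order is placed.
state = {
    "order": {"id": "ORD-1", "status": "CREATED", "quantity": 2},
    "payment": {"transaction_status": "SUCCESS", "charge_ids": ["CH-1"]},
    "inventory": {"before": 10, "after": 8},
    "email": {"sent": True, "body": "Order ORD-1, total 40.00, ship to 1 Main St"},
    "shipment": {"created": True, "warehouse": "WH-EAST", "expected_warehouse": "WH-EAST"},
    "audit": {"user": "alice", "timestamp": "2024-01-01T12:00:00Z"},
}


def check_order(s):
    return bool(s["order"]["id"]) and s["order"]["status"] == "CREATED"


def check_payment(s):
    p = s["payment"]
    # Exactly one charge means no duplicate charges.
    return p["transaction_status"] == "SUCCESS" and len(p["charge_ids"]) == 1


def check_inventory(s):
    inv = s["inventory"]
    return inv["after"] == inv["before"] - s["order"]["quantity"] and inv["after"] >= 0


def check_notification(s):
    return s["email"]["sent"] and s["order"]["id"] in s["email"]["body"]


def check_shipping(s):
    sh = s["shipment"]
    return sh["created"] and sh["warehouse"] == sh["expected_warehouse"]


def check_audit(s):
    return bool(s["audit"]["user"]) and bool(s["audit"]["timestamp"])


STEPS = [
    ("Step 1: Order creation", check_order),
    ("Step 2: Payment", check_payment),
    ("Step 3: Inventory", check_inventory),
    ("Step 4: Notifications", check_notification),
    ("Step 5: Shipping", check_shipping),
    ("Step 6: Audit & compliance", check_audit),
]


def run_flow(s):
    """Run every step in order and return the names of the steps that failed."""
    return [name for name, check in STEPS if not check(s)]


failed = run_flow(state)

# Simulate a silent regression: the order succeeds but stock is unchanged.
broken = {**state, "inventory": {"before": 10, "after": 10}}
failed_broken = run_flow(broken)
print(failed)
print(failed_broken)
```

With the healthy snapshot no step fails; with the simulated regression only the inventory step is reported, which pinpoints where the business flow broke.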

Architecture

BUCT runs on two components:

- Control plane
  - Parses source code and PRDs
  - Generates business use cases
  - Maps requirements to test coverage
- Agent
  - Executes end-to-end business flows
  - Handles setup and teardown per playbook
  - Validates runtime-generated values (dynamic IDs, timestamps)
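Runtime-generated values cannot be compared against a fixed expected value, so a check typically validates their shape or range instead. The sketch below illustrates that idea in Python; the `ORD-` ID format, the 30-second tolerance, and the function names are assumptions for the example, not the agent's actual behaviour.

```python
import re
from datetime import datetime, timedelta, timezone

# Assumed ID format for illustration: "ORD-" followed by 8 hex characters.
ORDER_ID_PATTERN = re.compile(r"ORD-[0-9a-f]{8}")


def is_valid_order_id(value):
    """Check the shape of a dynamic ID rather than its exact value."""
    return ORDER_ID_PATTERN.fullmatch(value) is not None


def is_recent(timestamp, now, tolerance=timedelta(seconds=30)):
    """True if timestamp is not in the future and within tolerance of now."""
    return timedelta(0) <= now - timestamp <= tolerance


now = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
created_at = now - timedelta(seconds=5)

print(is_valid_order_id("ORD-3fa85f64"))
print(is_valid_order_id("ORD-123"))
print(is_recent(created_at, now))
```

Passing `now` in explicitly keeps the check deterministic, which matters when the same flow is replayed across environments.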

Why the split?

The control plane (intelligence) stays separate from the agent (execution), making BUCT portable across any CI/CD system.

Key capabilities

- AI-driven flow generation: use cases are generated directly from PRD text, with no manual scripting.
- Cross-service orchestration: validates workflows spanning multiple services, not just individual endpoints.
- Playbook composability: reusable, version-controlled playbooks reduce duplication across test suites.
- Deterministic execution: consistent results for AI-generated code across environments.
- Revenue-path confidence: prioritises validation of business-critical flows over low-level noise.